It was yet another exciting week…

When Cloud or SaaS application performance starts impacting user productivity, how do you go about troubleshooting? Performance can be extremely subjective… what is fast to some people is slow to others and vice versa. How do you even measure performance? Invariably people want to blame the network because that’s the simplest answer. However, it can take a lot of effort and due diligence to dig down and find the actual culprit.

In this specific case we had ~8,000 miles between the users and the server infrastructure, so I was personally expecting additional challenges: the long round-trip time (220ms) could play some role in any possible issue or issues.
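To put that 220ms in context, here is a quick back-of-the-envelope sketch of the best-case round-trip time that distance alone allows. It assumes light travels through fiber at roughly 200,000 km/s (about two-thirds of its speed in a vacuum) and that the cable runs in a straight line, neither of which is true of a real Internet path, so treat the result as a floor rather than a target.

```python
# Rough lower bound on round-trip time imposed by distance alone.
MILES_TO_KM = 1.609344
FIBER_KM_PER_S = 200_000  # assumption: light in fiber moves at roughly 2/3 of c


def min_rtt_ms(distance_miles: float) -> float:
    """Best-case RTT in milliseconds for a straight fiber run of this length."""
    one_way_s = (distance_miles * MILES_TO_KM) / FIBER_KM_PER_S
    return 2 * one_way_s * 1000


floor_ms = min_rtt_ms(8_000)
print(f"theoretical floor: {floor_ms:.0f} ms, observed: 220 ms")
```

Under those assumptions well over half of the observed round trip is simply physics, which no amount of tuning will remove.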

Let’s try to frame the issue:

- Is the issue persistent or intermittent? Intermittent

- Is the issue occurring with any regularity? Yes, 11:00AM – 12:30PM local time daily

- Is the issue impacting every user or just specific users? Multiple users, not clear if every user is impacted but a majority of users

- Is there anything common among the impacted users? They are all using the same VPN and proxy server infrastructure, they are all located in the same country.

- When did the problem start? Users have been working for 3+ months without issue, but this problem is fresh within the past 2 weeks.

The last point is likely key… so what’s changed in the past 2 weeks that’s causing this issue? Let’s get to that later but those simple facts are key in driving your investigation.

We start with the simple baseline network tests:

- ping – good with minimal packet loss

- traceroute (mtr) – looks like pathways with multiple ISPs

- speed tests – generally good

- packet capture – in general looks good, some out of order packets, some dupe ACKs, these are likely the result of the ~ 8,000 miles between the endpoints.

In the baseline results there are no smoking guns but there are some suspect data points in there, although we need to remember that this isn’t a LAN based application. This is an Internet based application with 8,000 miles between the endpoints so there is going to be some noise in the packet trace.

Note: I’ve seen all sorts of interesting Internet issues since March 2020 when the pandemic lock-down first kicked off here in the US, and again recently at the beginning of September 2020 when the majority of US school students returned to remote learning. I observed that a large number of my US users had better latency to our UK VPN gateways than to our local US VPN gateways. Ultimately we found that a number of Internet peering points between the different Internet Service Providers (I’m being nice here and not naming names) were getting completely blasted and were adding 75-125ms to every packet. Eventually the providers addressed this problem with additional peering but it was a painful couple of weeks.

Now what we need are some additional data points that can be collected during the issue:

- HAR (HTTP Archive) from Chrome web browser collected from user experiencing issue – this was a key piece of data that helped move the issue forward

- packet capture – couldn’t be collected because the users’ computers were locked down
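If you want to take a first pass through a HAR file yourself, a minimal Python sketch along these lines can help. It relies on the standard HAR 1.2 layout, where each entry under `log.entries` carries a `timings.blocked` value (time spent queued waiting for a network connection, or -1 when not applicable). The file name in the usage comment is hypothetical, and this is not the vendor's tooling, just one way to surface the requests that sat in the queue longest.

```python
import json
from collections import defaultdict
from urllib.parse import urlsplit


def load_har(path: str) -> dict:
    """Read a HAR file exported from the browser's developer tools."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


def slowest_queued(har: dict, top: int = 5) -> list:
    """Return (blocked_ms, url) for the requests that queued the longest."""
    rows = []
    for entry in har["log"]["entries"]:
        blocked = entry.get("timings", {}).get("blocked")
        # HAR uses -1 (or omits the field) when the timing does not apply.
        if blocked is not None and blocked > 0:
            rows.append((blocked, entry["request"]["url"]))
    return sorted(rows, reverse=True)[:top]


def blocked_ms_by_host(har: dict) -> dict:
    """Total queueing time per hostname, in milliseconds."""
    totals = defaultdict(float)
    for entry in har["log"]["entries"]:
        blocked = entry.get("timings", {}).get("blocked")
        if blocked is not None and blocked > 0:
            totals[urlsplit(entry["request"]["url"]).hostname] += blocked
    return dict(totals)


# Usage (hypothetical file name):
# har = load_har("session.har")
# for ms, url in slowest_queued(har):
#     print(f"{ms:8.0f} ms  {url}")
```

Large `blocked` values concentrated on a single hostname are a hint that requests are waiting for a free connection rather than waiting on the server.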

What can we do to monitor the performance of the cloud application?

- ping – We set up ping monitors from a number of data centers globally to monitor for basic availability

- curl – We set up some simple HTTP/HTTPS monitoring using cURL

- selenium – At the recommendation of the application provider we set up ThousandEyes and a transaction monitor to generate synthetic transactions by logging into the application and working through a few different functions which themselves have dependencies on external REST and SOAP APIs.
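For anyone without cURL handy, the same kind of basic "front door" check can be sketched in Python using only the standard library. This is not the monitoring we actually deployed; the URL in the usage comment is a placeholder, and the function names are made up for illustration.

```python
import math
import statistics
import time
import urllib.request


def time_request(url: str, timeout: float = 10.0) -> float:
    """Seconds taken to fetch the URL and read the whole response body."""
    start = time.perf_counter()
    # urlopen raises an exception on timeouts and on HTTP 4xx/5xx responses.
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        resp.read()
    return time.perf_counter() - start


def summarize(samples: list) -> dict:
    """Min, median, 95th percentile and max of a list of timings."""
    ordered = sorted(samples)
    p95_index = max(0, math.ceil(0.95 * len(ordered)) - 1)
    return {
        "min": ordered[0],
        "median": statistics.median(ordered),
        "p95": ordered[p95_index],
        "max": ordered[-1],
    }


# Usage (placeholder URL, one sample per minute):
# samples = []
# for _ in range(60):
#     samples.append(time_request("https://app.example.com/health"))
#     time.sleep(60)
# print(summarize(samples))
```

Tracking the 95th percentile alongside the median matters here because an intermittent problem can leave the median looking perfectly healthy.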

The application itself has a number of dependencies on external microservices, so initially we were concerned that these external services might be having performance issues of their own which were impacting the application. So we had to set up additional monitoring to try and validate the performance of those REST and SOAP APIs during the reported timeframes.

This was my first foray into working with Selenium and ThousandEyes but I was able to kludge my way through the solution after about 2 days. I did run into a few problems with the application website using dynamic Class IDs but eventually I got some basic tests working properly. The solution itself worked fairly well… we had some decent “front door” statistics within hours and the synthetic transaction data gave us a good idea that the application was performing properly during the reported timeframes the users were experiencing issues.

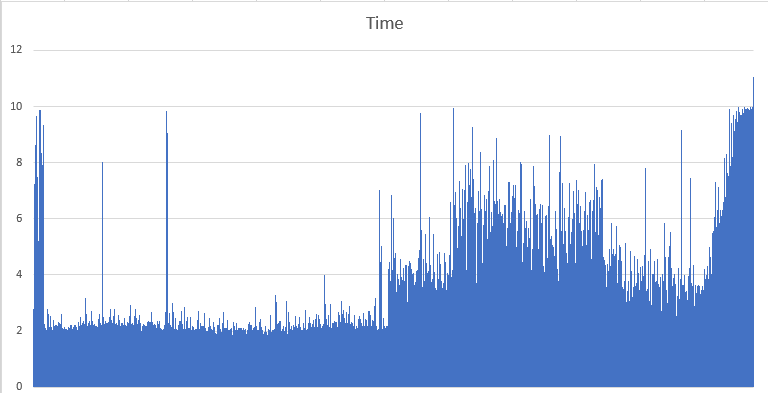

The application vendor was extremely helpful in examining the HAR data, and quickly determined from the HAR and their own internal logs that HTTP/HTTPS requests from the clients were being queued up and delayed from reaching their back-end infrastructure (Chrome only allows 6 concurrent connections to a single hostname). Within the HAR data the vendor observed some fairly aggressive custom polling within the application that was making unconditional JavaScript calls every 2 seconds, each of which resulted in a 12Kb data set being transferred to the client. The initial theory was that some Internet slowdown was causing the client requests to slow down and eventually fall behind, which, coupled with the unconditional JavaScript calls and the six connection limit in Chrome, led to an extremely poor user experience.
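To see how a fixed polling interval and a six connection limit interact, here is a small, deliberately simplified simulation in Python. It assumes every poll takes the same amount of time and that nothing else competes for the connections (in reality other page requests share the same six slots, so trouble starts sooner). The response times used are illustrative only, not values measured in this incident.

```python
import heapq


def queue_delays(service_time_s: float, poll_interval_s: float = 2.0,
                 max_connections: int = 6, polls: int = 30) -> list:
    """Seconds each poll waits for a free connection before it can be sent."""
    free_at = [0.0] * max_connections  # min-heap: when each connection frees up
    heapq.heapify(free_at)
    delays = []
    for i in range(polls):
        issued = i * poll_interval_s          # the page fires a poll every interval
        slot_free = heapq.heappop(free_at)    # earliest available connection
        start = max(issued, slot_free)
        delays.append(start - issued)
        heapq.heappush(free_at, start + service_time_s)
    return delays


for service_time in (0.5, 15.0):
    delays = queue_delays(service_time)
    print(f"response time {service_time:>4}s -> "
          f"last poll waited {delays[-1]:.0f}s for a connection")
```

In this model the polls alone saturate the browser once a response takes longer than 6 × 2 = 12 seconds, and from that point every unconditional poll adds to a backlog that never drains on its own.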

We eventually learned that the infrastructure the users were riding had switched Internet Service Providers two weeks earlier. Hmmm… hadn’t the issues started 2 weeks earlier? Yes they had! Ultimately we determined that there was enough occasional packet loss and packet retransmission over this new Internet link that it was impacting this specific application. The infrastructure was switched back to the original Internet link and the issue hasn’t been observed since.
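Why would occasional loss hurt so much on this path in particular? One rough way to reason about it is the well-known Mathis approximation for steady-state TCP throughput, throughput ≤ (MSS / RTT) × (C / √p), sketched below in Python. The loss rates and the 1460-byte MSS are assumptions for illustration (we never pinned down an exact loss figure), and the model describes classic Reno-style congestion control, so modern stacks will behave somewhat differently.

```python
import math


def mathis_throughput_bps(mss_bytes: int, rtt_s: float, loss_rate: float) -> float:
    """Approximate upper bound on single-flow TCP throughput, in bits per second."""
    c = math.sqrt(3 / 2)  # constant from the periodic-loss form of the model
    return (mss_bytes * 8 / rtt_s) * (c / math.sqrt(loss_rate))


MSS = 1460  # assumption: typical Ethernet-sized segment
for rtt_ms in (20, 220):
    for loss in (0.001, 0.01):  # hypothetical loss rates
        mbps = mathis_throughput_bps(MSS, rtt_ms / 1000, loss) / 1e6
        print(f"RTT {rtt_ms:>3} ms, loss {loss:.1%}: ~{mbps:.2f} Mbit/s per connection")
```

Because throughput in this model is inversely proportional to RTT, the same loss rate that is barely noticeable on a short path becomes eleven times more costly at 220ms than at 20ms.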

My Thoughts?

In this specific case the intermittent packet loss and retransmissions were causing the application to fall behind in its communications with the backend infrastructure, which was resulting in an extremely poor user experience. It’s relatively safe to argue that if the application code wasn’t as aggressive in its polling, it could potentially “tolerate” a certain amount of packet loss and retransmissions.

I personally believe as a network engineer it’s invaluable to learn why something doesn’t work instead of just accepting that it doesn’t work. Inevitably there will be things that we can’t explain, but I’m a huge advocate of spending the effort to make sure you understand the vast majority. It’s really the only way you’ll make the environment around you better and ultimately more resilient.

Cheers!